Hi there,

I have recently started having problems with data upload and processing. Below is a sample CSV file:

Area_2_2026_01_21_edited_to_MA.csv (64.1 KB) (Non-TIC normalized)

Steps leading up to issue:

One-factor analysis, peak intensity, samples in columns.

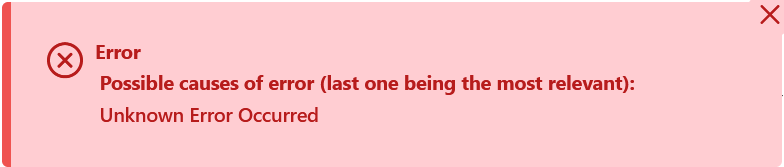

Previously, I was able to use the blank subtraction function in conjunction with the IQR filter, but now it gives this error:

This is problematic because I was relying on the “standardize by reference feature” option to normalize by the “weight” feature in the dataset. I got around the weight feature being removed by the filter by setting the BLANK weights to 1, which seems fine to me as the blanks will be removed anyway. I am unsure whether setting the blank weights extremely high, to keep that feature from being filtered, would lead to problems later on. I would like to avoid entering weights manually if possible, as I have many more datasets like this one.
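For reference, the workaround of setting the BLANK weights to 1 could be scripted rather than done by hand. This is only a rough sketch, not a tested pipeline. It assumes samples are in columns, the first row holds sample names, blank samples have "BLANK" somewhere in their column name, and the weight feature is a row whose first cell is "weight". The function name `set_blank_weights` and its parameters are made up for illustration.

```python
import csv
import io


def set_blank_weights(rows, weight_label="weight", blank_tag="BLANK", value="1"):
    """Return a copy of `rows` with the weight row set to `value` in blank columns.

    `rows` is a list of lists as produced by csv.reader. The labels used to
    find the weight row and the blank columns are assumptions about the file.
    """
    header = rows[0]
    # Columns whose sample name contains the blank tag (case-insensitive);
    # column 0 is skipped because it holds the feature names.
    blank_cols = [
        i for i, name in enumerate(header)
        if i > 0 and blank_tag.upper() in name.upper()
    ]
    out = []
    for row in rows:
        new_row = list(row)
        if row and row[0].strip().lower() == weight_label.lower():
            for i in blank_cols:
                if i < len(new_row):
                    new_row[i] = value
        out.append(new_row)
    return out


# Tiny made-up table in the assumed layout (not real data).
sample = "Sample,S1,S2,BLANK_1\nLabel,A,B,Blank\nweight,10.2,9.8,\nfeat1,100,120,5\n"
rows = list(csv.reader(io.StringIO(sample)))
fixed = set_blank_weights(rows)

# Write the result back out as CSV text.
buf = io.StringIO()
csv.writer(buf, lineterminator="\n").writerows(fixed)
result_csv = buf.getvalue()
```

For real files, the same function could be applied in a loop over each dataset, reading with `csv.reader` and writing with `csv.writer`. Only the weight row is touched and all other values are left as they are.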

Another thing: the drop-down in the normalization menu only rarely appears for me. Is there a cap on the number of features? (I usually just type it in.)